For over a decade, organizations have been investing heavily in GIS tools and capturing petabytes of geospatial data. These massive dataset acquisitions show that geospatial technologies are very powerful. However, powerful doesn’t necessarily equate to useful, particularly for team members who aren’t on the geospatial team.

Initiatives to make those datasets more accessible are often deployed with good intent, but they require significant technical expertise, time, and resources. Additionally, without clear indicators and success metrics for how useful the data is to the end-user, which is what Project Managers (PMs) care about, these initiatives tend to fall short of expectations.

They fall short not because of their technical capability, but often because organizations lack an understanding of their own geospatial stack: they don’t know its capabilities, or which other solutions work well within their geospatial ecosystem. Often there isn’t any real in-depth analysis of an organization’s entire geospatial architecture before new tools are implemented.

Geospatial datasets live in silos.

Within a geospatial stack, geospatial data is often siloed and not shared as a data source across the different teams in an organization. In reality, geospatial data should be easily accessible so that Business Intelligence tools, product analytics, and marketing solutions can plug into it and make sense of the data.

In a small organization, it’s easy to run queries and new analyses on your own siloed data. This fails at scale for a simple reason: the team members who use the data (Geospatial Analysts, Solutions Specialists) aren’t the ones managing the data (Engineers). These teams have different priorities and might even be in different departments.

Requesting access to specific datasets and waiting weeks to get data leads to stagnation – not innovation.

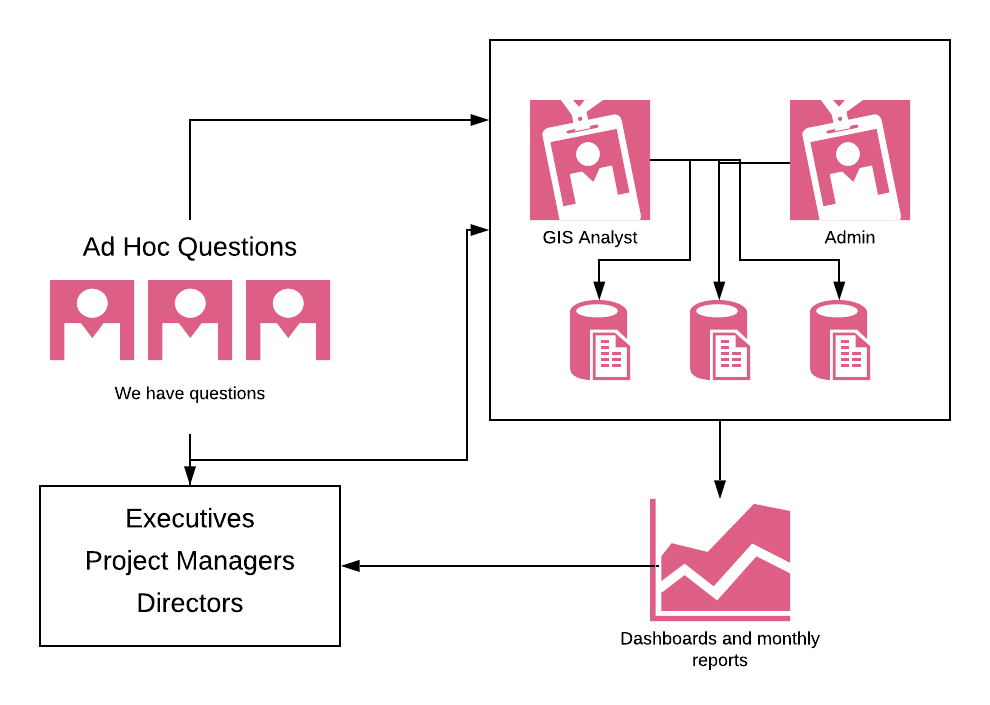

Additionally, when geospatial data is siloed, you typically have to go through one person to get any answers. Whether it’s a geospatial analyst or a data expert, their unique technical skills make them the gatekeepers of geospatial data. And even if you have the technical skills to do it yourself, it’s time-consuming at best, making it difficult to get answers in a reasonable timeframe.

Querying a dataset requires some technical skill in SQL or another kind of coding to pull the right information, create a report that adds context to the data, and then provide an answer to the question. In short, anybody outside of the geospatial team with limited technical skills struggles to get answers to their questions quickly (or at all). Meanwhile, the experts who were hired to solve data challenges are inundated with small requests from various teams and can’t focus their energy on bigger, more impactful initiatives that can move the needle.

It’s an issue that perpetuates itself, bottlenecking innovation and growth within an organization.
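To give a sense of the skills involved, here is a minimal sketch of what answering even a simple question ("which sites fall inside this area?") can look like. Everything in it is an assumption for illustration: the `sites` table, its columns, the coordinates, and the `sites_in_bbox` helper are hypothetical, and a plain bounding-box filter in SQLite stands in for the spatial functions a real geospatial database would provide.

```python
import sqlite3

# Hypothetical table of field sites; names and coordinates are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sites (name TEXT, region TEXT, lon REAL, lat REAL)")
conn.executemany(
    "INSERT INTO sites VALUES (?, ?, ?, ?)",
    [
        ("Site A", "north", -122.75, 53.92),
        ("Site B", "north", -122.60, 53.88),
        ("Site C", "south", -123.12, 49.28),
    ],
)


def sites_in_bbox(conn, min_lon, min_lat, max_lon, max_lat):
    """Return the names of sites whose point falls inside a bounding box."""
    rows = conn.execute(
        "SELECT name FROM sites "
        "WHERE lon BETWEEN ? AND ? AND lat BETWEEN ? AND ? "
        "ORDER BY name",
        (min_lon, max_lon, min_lat, max_lat),
    ).fetchall()
    return [name for (name,) in rows]


# A rough box around the two "north" sites.
north_sites = sites_in_bbox(conn, -123.0, 53.5, -122.0, 54.5)
print(north_sites)
```

Even this toy version assumes the person asking knows the table layout, the coordinate order, and enough SQL to write the filter, and that is before any report or context is built around the result.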

A geospatial analytics solution eliminates the need for a data queue, empowering teams to answer the burning questions they have about their technology usage and their users. With analytics, you not only get persistent metrics and dashboards that stay current, but PMs can also segment information at a granular level to answer questions that require deeper, exploratory analysis.

Incorporating an analytics solution into your geospatial stack

At Sparkgeo, we work with several organizations that are leveraging geospatial analytics solutions to help guide their geospatial software implementations or to satisfy their stakeholders’ needs for data insights across an organization.

Need a geospatial partner? Come say hi to our team.